I’ve watched too many startups waste months building Kubernetes platforms they don’t need. Smart engineers, good intentions, bad outcomes.

They read about how Netflix runs on Kubernetes. They see job postings requiring K8s experience. They assume that’s where they need to be. So they spin up EKS, spend weeks figuring out networking, fight with Helm charts, and eventually get a deployment working.

Six months later, they have one service running on a cluster that costs more than the AWS bill it replaced. The engineer who set it up left. Nobody knows how to debug it.

I’ve seen this pattern dozens of times. It’s predictable. It’s preventable.

What Kubernetes Actually Solves

Kubernetes solves coordination at scale. When you have hundreds of services, thousands of containers, and teams that need to ship independently, Kubernetes provides:

- Declarative infrastructure that you can version control

- Service discovery without hardcoded addresses

- Automatic scaling and self-healing

- Resource isolation between teams

- A common deployment interface regardless of what’s underneath

These are real problems. At scale.

If you have five services and three engineers, you don’t have these problems. You have different problems.

The Real Startup Problems

Early-stage startups struggle with:

- Shipping fast enough. Features need to get to users. Every hour spent on infrastructure is an hour not spent on product.

- Debugging production issues. When something breaks at 2am, you need to understand what happened. Quickly.

- Keeping costs predictable. Cloud bills surprise you. You need to understand what you’re paying for.

- Onboarding new engineers. New hires should ship code in their first week, not spend it learning your deployment system.

Kubernetes makes all of these harder, not easier, for small teams.

The Hidden Complexity

“But Kubernetes is just YAML,” people say. “It’s not that complex.”

Let’s count the things you need to understand to run a production Kubernetes cluster:

- Cluster networking (CNI plugins, service mesh, network policies)

- Ingress controllers and load balancing

- Certificate management

- Secrets management

- Storage (PVCs, CSI drivers, storage classes)

- Resource requests and limits

- Pod security

- RBAC

- Monitoring and observability

- Logging aggregation

- Autoscaling (HPA, VPA, cluster autoscaler)

- Node management

- Upgrades and maintenance

That’s a partial list. Each of those items has its own learning curve, failure modes, and operational overhead.

A managed Kubernetes service (EKS, GKE, AKS) handles some of this, but not all. You still need to understand networking, ingress, storage, and security. You still need to maintain and upgrade.

For a team of three engineers trying to find product-market fit, this is insane overhead.

What to Use Instead

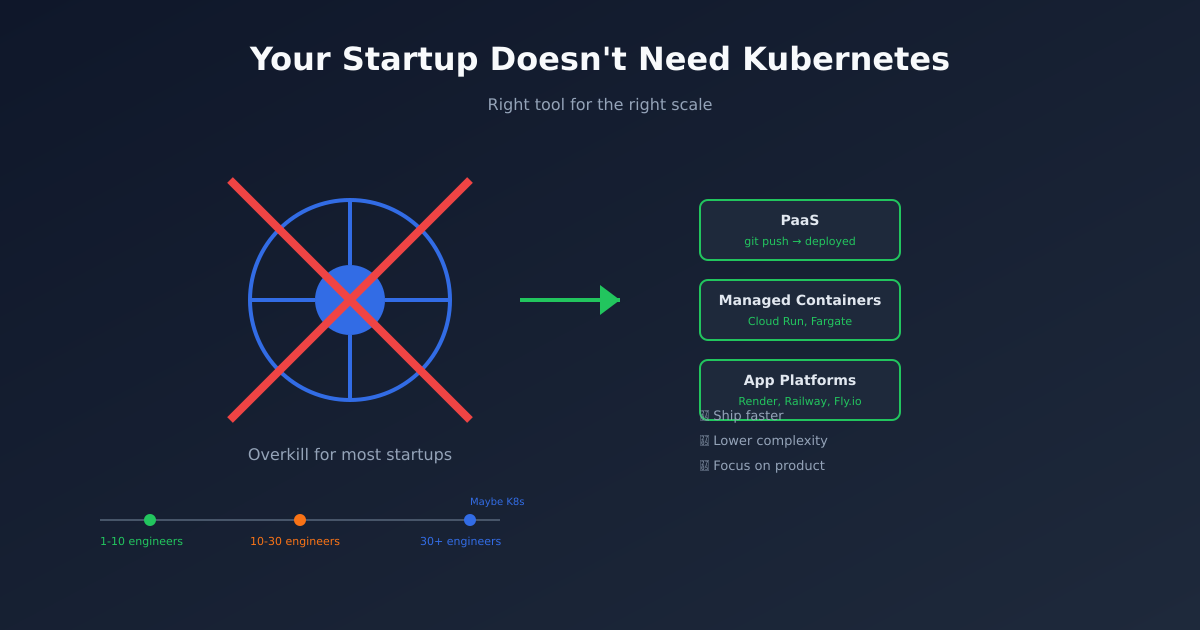

Here’s my recommendation ladder for startups:

0-10 engineers: Platform-as-a-Service

Use Render, Railway, Fly.io, or Heroku. Deploy with git push. Get automatic TLS, custom domains, and reasonable scaling. Focus on your product.

Yes, it’s more expensive per compute unit. You’re paying for operational simplicity. That’s a good trade when engineering time is your scarcest resource.

10-30 engineers: Managed containers without orchestration

AWS App Runner, Google Cloud Run, Azure Container Apps. You get containers without the orchestration complexity. Each service is independent. Scaling is automatic.

If you need more control, ECS with Fargate is a good middle ground. You get task definitions and service discovery without the full Kubernetes abstraction.

30+ engineers: Maybe Kubernetes

At this scale, you probably have:

- Multiple teams shipping independently

- Complex service dependencies

- Platform engineers who can own the infrastructure

Now Kubernetes might make sense. But even then, question whether managed services could do the job.
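If it helps to see the ladder as something you could paste into a team wiki, here is a minimal sketch in Python. The function name and the returned labels are my own placeholders. The ranges above overlap at 10 and 30, so this sketch assumes the boundary values fall on the simpler rung.

```python
def recommend_platform(engineer_count: int) -> str:
    """Map headcount to a rung of the recommendation ladder.

    Assumption: teams of exactly 10 or 30 stay on the simpler rung,
    since the ranges in the text overlap at those values.
    """
    if engineer_count < 0:
        raise ValueError("engineer_count must be non-negative")
    if engineer_count <= 10:
        return "Platform-as-a-Service"
    if engineer_count <= 30:
        return "Managed containers without orchestration"
    return "Maybe Kubernetes"


if __name__ == "__main__":
    for size in (3, 10, 25, 60):
        print(size, "->", recommend_platform(size))
```

Headcount is only a proxy. The signs in the next two sections matter more than the exact number.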

Signs You’re Not Ready

You’re not ready for Kubernetes if:

- You don’t have a dedicated platform team. Someone needs to own the cluster. If that’s a fraction of one engineer’s time, you’ll have a neglected, brittle system.

- Your services are tightly coupled. If deploying service A requires deploying service B, you don’t have the architectural independence that Kubernetes is designed for.

- You’re still searching for product-market fit. Your product will pivot. Your architecture will change. Don’t lock in infrastructure decisions before you know what you’re building.

- Your team doesn’t have Kubernetes experience. Learning Kubernetes in production is painful. You’ll make mistakes that cause outages.

- Your traffic is predictable. If you know how much capacity you need and it doesn’t change much, you don’t need sophisticated autoscaling.

Signs You Might Be Ready

Consider Kubernetes if:

- Multiple teams need to deploy independently. Kubernetes provides isolation and common interfaces that help teams stay out of each other’s way.

- You have more than 50 services. At this scale, manual coordination breaks down. Declarative infrastructure becomes essential.

- Your traffic is highly variable. Black Friday traffic spikes, viral moments, unpredictable demand: autoscaling at the container level helps.

- You need multi-cloud or hybrid deployments. Kubernetes provides a consistent interface across environments.

- You have platform engineers who want to build on it. Kubernetes is a foundation for building internal platforms. If you’re investing in that, the complexity is worth it.

The Career Angle

I know why engineers push for Kubernetes at early startups. It’s on every job description. It’s what “modern” companies use. Not having K8s experience feels like a gap in your resume.

I get it. But optimising your company’s infrastructure for your resume is backwards. And honestly, managing a single EKS cluster at a startup isn’t impressive K8s experience anyway.

If you want Kubernetes experience, contribute to open source. Build a homelab. Take a course. Don’t use your employer’s limited runway as a learning opportunity.

The Sunk Cost Trap

Some of you are reading this with a half-built Kubernetes setup. You’ve invested months. Walking away feels wasteful.

Do the math anyway.

How much time will you spend maintaining this cluster over the next year? How many incidents will trace back to infrastructure complexity? How many features won’t ship because engineers are fighting with deployments?

Compare that to the migration cost of moving to something simpler.

Sometimes the right move is to cut your losses. A migration that takes two weeks but saves ongoing pain is worth it.
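To make "do the math" concrete, here is a rough sketch in Python of the comparison described above. Every name and every number in it is a made-up placeholder, not data from a real team. It also assumes all costs can be expressed in engineer-hours over one year, which ignores the cloud bill itself.

```python
from dataclasses import dataclass


@dataclass
class ClusterCosts:
    """Hypothetical yearly cost inputs, all in engineer-hours."""
    maintenance_hours_per_month: float          # upgrades, node management, etc.
    deployment_friction_hours_per_month: float  # time lost fighting deployments
    incidents_per_year: float                   # incidents traced to infra complexity
    hours_per_incident: float


def annual_cluster_hours(costs: ClusterCosts) -> float:
    """Total engineer-hours the cluster is expected to consume in a year."""
    monthly = (costs.maintenance_hours_per_month
               + costs.deployment_friction_hours_per_month)
    return 12 * monthly + costs.incidents_per_year * costs.hours_per_incident


def migration_pays_off(costs: ClusterCosts,
                       migration_hours: float,
                       simpler_platform_hours_per_year: float = 0.0) -> bool:
    """True if migrating costs less over one year than keeping the cluster."""
    return (migration_hours + simpler_platform_hours_per_year
            < annual_cluster_hours(costs))


if __name__ == "__main__":
    # Placeholder numbers for illustration only.
    costs = ClusterCosts(
        maintenance_hours_per_month=20,
        deployment_friction_hours_per_month=10,
        incidents_per_year=6,
        hours_per_incident=8,
    )
    print(annual_cluster_hours(costs))  # 12 * 30 + 6 * 8 = 408
    # A two-week migration by one engineer is roughly 80 hours.
    print(migration_pays_off(costs, migration_hours=80,
                             simpler_platform_hours_per_year=60))
```

Plug in your own estimates. If the answer stays the same when you halve or double them, the decision is probably not close.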

A Better Approach

If you’re early stage and want to do this right:

- Start with PaaS. Seriously. Render or Railway. Ship features.

- Containerise early. Even on PaaS, run containers. This keeps your options open.

- Document dependencies. Keep a clear picture of what talks to what. This makes migration easier later.

- Monitor costs and pain points. When PaaS costs become unreasonable or limitations hurt, you’ll know it’s time to migrate.

- Plan the migration before you need it. Know what a Kubernetes migration would look like. Document the steps. Don’t execute until you’re ready.

- Hire platform engineers before migrating. Get people who’ve done this before. They’ll make better decisions than you will.
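The "document dependencies" step above does not need special tooling. One possible sketch, in Python: keep the map of what talks to what as plain data in the repo, then derive an order in which services could be moved, dependencies first. The service names are hypothetical, and using the standard library's `graphlib` (Python 3.9+) is my choice for illustration, not a requirement.

```python
from graphlib import TopologicalSorter

# Hypothetical dependency map: each service lists what it talks to.
DEPENDS_ON = {
    "web": ["api"],
    "api": ["auth", "postgres"],
    "auth": ["postgres"],
    "worker": ["api", "redis"],
}


def migration_order(depends_on: dict[str, list[str]]) -> list[str]:
    """Return services ordered so each one comes after its dependencies.

    Raises graphlib.CycleError if services depend on each other in a loop,
    which is itself a useful signal of tight coupling.
    """
    return list(TopologicalSorter(depends_on).static_order())


def dependents_of(depends_on: dict[str, list[str]], target: str) -> set[str]:
    """Return the services that call `target` directly."""
    return {svc for svc, deps in depends_on.items() if target in deps}


if __name__ == "__main__":
    print(migration_order(DEPENDS_ON))
    print(dependents_of(DEPENDS_ON, "postgres"))
```

A file like this is cheap to keep current in code review, and a cycle error is an early warning that deploying service A requires deploying service B.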

The Nuance

I’m not saying Kubernetes is bad. It’s an incredible piece of technology that solves real problems.

I’m saying it’s a tool for a specific context. Large scale, multiple teams, complex orchestration needs. If that’s not your context, simpler tools exist.

The best infrastructure is invisible. Developers push code, it runs in production, users are happy. How that happens matters less than whether it happens.

For most startups, the path to invisible infrastructure runs through managed services and PaaS, not Kubernetes. Accept the constraints, embrace the simplicity, and ship your product.

Kubernetes will still be there when you need it.